
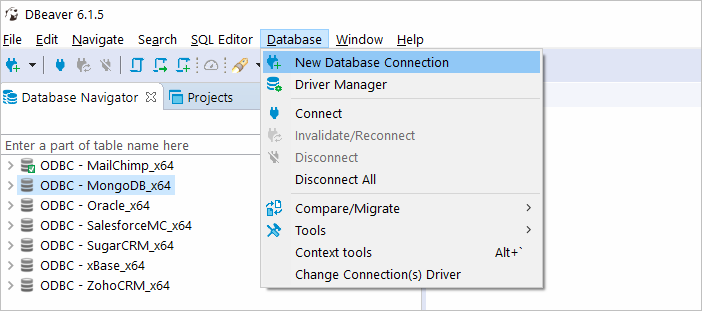

Markus Schmidberger is a Senior Big Data Consultant for AWS Professional Services

Amazon Redshift is a fast, petabyte-scale cloud data warehouse. AWS customers are moving huge amounts of structured data into Amazon Redshift to offload analytics workloads or to operate their data warehouse (DWH) fully in the cloud. Business intelligence and analytics teams can use JDBC or ODBC connections to import, read, and analyze data with their favorite tools, such as Informatica or Tableau.

R is an open source programming language and software environment designed for statistical computing, visualization, and data analysis. Due to its flexible package system and powerful statistical engine, R can provide methods and technologies to manage and process large amounts of data. R is the fastest growing analytics platform in the world, and is established in both academia and business due to its robustness, reliability, and accuracy. For more tips on installing and operating R on AWS, see Running R on AWS.

In this post, I describe some best practices for efficient analyses of data in Amazon Redshift with the statistical software R running on your computer or Amazon EC2.

Start an Amazon Redshift cluster (Step 2: Launch a Sample Amazon Redshift Cluster) with two dc1.large nodes and mark the Publicly Accessible field as Yes to add a public IP to your cluster. If you run an Amazon Redshift production cluster, you might not choose this option. Discussing the available security mechanisms could be a separate blog post all by itself, and would add too much to this one. In the meantime, see the Amazon Redshift documentation for more details about security, VPC, and data encryption (Amazon Redshift Security Overview).

For working with the cluster, you need the following connection information:

You can access the fields by logging into the AWS console, choosing Amazon Redshift, and then selecting your cluster.

To demonstrate the power and usability of R for analyzing data in Amazon Redshift, this post uses the “Airline on-time performance” data set as an example. This data set consists of flight arrival and departure details for all commercial flights within the USA, from October 1987 to April 2008. There are roughly 120 million records in total, which take up 1.6 gigabytes of space compressed and 12 gigabytes when uncompressed.

Copy the data to an Amazon S3 bucket in the same region as your Amazon Redshift cluster, then create the tables and load the data with the following SQL commands. For this demo, our cluster has an IAM role attached to it that has access to the S3 bucket. For more information on allowing an Amazon Redshift cluster to access other AWS services, see the Amazon Redshift documentation.

    CREATE TABLE flights(
    actualelapsedtime integer encode bytedict,
    COPY flights FROM 's3://data-airline-performance/'

* If you are using the default IAM role with your cluster, you can replace the ARN with `default`.

    SELECT count(*) FROM flights /* 123.534.969 */

For these steps, I recommend connecting to the Amazon Redshift cluster with SQL Workbench/J (Connect to Your Cluster by Using SQL Workbench/J). Be aware that the COPY command executes a parallel load for each file of the data located in Amazon S3, which accelerates the loading process.

Your R environment

Before running R commands, you have to decide where to run your R session. Depending on your Amazon Redshift configuration, there are several options:

Your IT department or database administrator can provide you with more details about your Amazon Redshift installation. I recommend running R on an Amazon EC2 instance using the Amazon Linux AMI as described in Running R on AWS. The EC2 instance will be located next to your Amazon Redshift cluster, with reduced latency for your JDBC connection.

Connecting R to Amazon Redshift with RJDBC

As soon as you have an R session and the data loaded to Amazon Redshift, you can connect them. The recommended connection method is using a client application or tool that executes SQL statements through the PostgreSQL ODBC or JDBC drivers. In R, you can install the RJDBC package to load the JDBC driver and send SQL queries to Amazon Redshift. Choose the latest JDBC driver provided by AWS (Configure a JDBC Connection). This driver is based on the PostgreSQL JDBC driver but optimized for performance and memory management.

    install.packages("RJDBC")
    download.file('','RedshiftJDBC41-1.jar')

The `%>%` operator provides an alternative way of calling dplyr functions that you can read from left to right, and is very intuitive to use. If you are interested in all flights that make up more than 60 minutes during their flight, use the following example:

    flights %>%
    select(tailnum, depdelay, arrdelay, dest)
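The RJDBC connection this post describes might be sketched as follows. This is a minimal sketch, not the post's original code: the helper names `redshift_jdbc_url` and `connect_redshift` are hypothetical, the driver class name assumes the RedshiftJDBC41 driver, and the endpoint, database, user, and password are placeholders you would take from your own cluster.

```r
# Sketch only: helper names are hypothetical, and the driver class name
# assumes the RedshiftJDBC41 driver JAR mentioned in the post.

# Build a JDBC URL of the form jdbc:redshift://<host>:<port>/<database>.
redshift_jdbc_url <- function(host, port, dbname) {
  sprintf("jdbc:redshift://%s:%d/%s", host, as.integer(port), dbname)
}

# Load the driver and open a connection through RJDBC.
# Nothing is contacted until this function is actually called.
connect_redshift <- function(jar_path, host, port, dbname, user, password) {
  driver <- RJDBC::JDBC(
    driverClass = "com.amazon.redshift.jdbc41.Driver",
    classPath = jar_path
  )
  DBI::dbConnect(driver, redshift_jdbc_url(host, port, dbname),
                 user = user, password = password)
}

# Example usage (needs a reachable cluster, so it is left commented out):
# conn <- connect_redshift("RedshiftJDBC41-1.jar", "<endpoint>", 5439,
#                          "<database>", "<user>", "<password>")
# DBI::dbGetQuery(conn, "SELECT count(*) FROM flights")
# DBI::dbDisconnect(conn)
```

Keeping the URL construction in its own function makes it easy to check the connection string before any credentials are involved.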

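To illustrate the left-to-right style of a dplyr pipeline with `%>%`, here is a small sketch that runs on an in-memory data frame rather than on Amazon Redshift. The three sample rows are invented for illustration, and the filter condition `depdelay - arrdelay > 60` is an assumption about what "making up more than 60 minutes" means; it is not taken from the post.

```r
library(dplyr)

# Invented sample rows standing in for the flights table (delays in minutes).
flights <- data.frame(
  tailnum  = c("N101", "N202", "N303"),
  depdelay = c(90, 10, 120),
  arrdelay = c(20, 5, 70),
  dest     = c("SFO", "JFK", "SEA"),
  stringsAsFactors = FALSE
)

# Assumption: a flight "makes up" time when its departure delay exceeds
# its arrival delay; keep flights that recovered more than 60 minutes.
made_up <- flights %>%
  filter(depdelay - arrdelay > 60) %>%
  select(tailnum, depdelay, arrdelay, dest)

print(made_up)
```

Each step reads as one verb applied to the result of the previous one, which is the readability benefit of the pipe over nested function calls.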